How accurate are modern Environment & Ecology monitoring systems?


How accurate are modern Environment & Ecology monitoring systems in real industrial settings? For technical evaluators, accuracy is no longer just a specification on paper; it determines compliance, risk control, and operational confidence. This article examines the sensors, calibration methods, data integrity safeguards, and environmental variables that shape measurement reliability, helping decision-makers assess which systems can truly deliver dependable performance.

In industrial procurement and technical assessment, measurement accuracy must be judged in context. A system that delivers ±1% under laboratory conditions may drift significantly in coastal humidity, high-vibration process areas, or remote outdoor stations exposed to dust, temperature cycling, and unstable power. For EPC teams, facility managers, and procurement directors, the practical question is not whether a monitoring system is advanced, but whether it remains trustworthy over 12, 24, or 36 months of field operation.

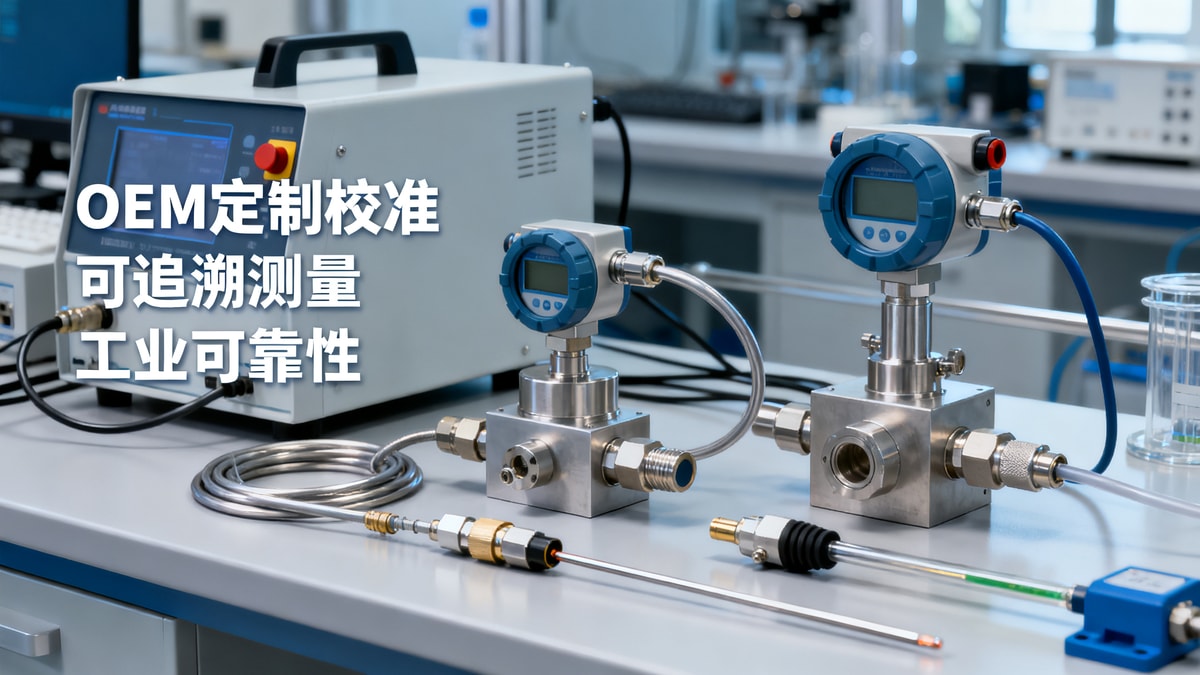

Modern Environment & Ecology monitoring systems now combine multi-parameter sensors, onboard diagnostics, edge computing, and cloud reporting. Yet technical evaluators still need a structured way to verify reliability across gas monitoring, particulate measurement, water quality testing, weather compensation, and data auditability. Accuracy is the result of system design, calibration discipline, installation quality, and maintenance strategy working together.

What accuracy really means in industrial Environment & Ecology monitoring systems

Accuracy in Environment & Ecology monitoring systems should never be treated as a single number. In practice, technical teams evaluate at least 4 dimensions: measurement error, repeatability, long-term drift, and system availability. A sensor may show strong initial precision, yet fail procurement expectations if recalibration is required every 30 days or if field uptime falls below 95%.
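To make the four dimensions concrete, the sketch below holds them in one record and checks a candidate against acceptance limits. The field names are hypothetical, and the limit values are illustrative assumptions apart from the 30-day recalibration and 95% uptime thresholds taken from the paragraph above; replace all of them with project-specific values.

```python
from dataclasses import dataclass


@dataclass
class AccuracyProfile:
    """Hypothetical record of the four accuracy dimensions for one monitor."""
    max_error_pct: float        # worst-case measurement error vs. reference, % of reading
    repeatability_pct: float    # relative standard deviation under stable conditions, %
    drift_pct_per_month: float  # long-term drift, % of reading per month
    recal_interval_days: int    # vendor-recommended recalibration interval
    uptime_pct: float           # field availability, % of expected records delivered


def review(profile: AccuracyProfile,
           max_error_pct: float = 2.0,
           max_repeatability_pct: float = 3.0,
           max_drift_pct_per_month: float = 1.0,
           min_recal_interval_days: int = 30,
           min_uptime_pct: float = 95.0) -> list[str]:
    """Return findings; an empty list means every check passed.

    Default limits are illustrative assumptions, not a standard.
    """
    findings = []
    if profile.max_error_pct > max_error_pct:
        findings.append("measurement error above limit")
    if profile.repeatability_pct > max_repeatability_pct:
        findings.append("repeatability above limit")
    if profile.drift_pct_per_month > max_drift_pct_per_month:
        findings.append("drift above limit")
    # Recalibration needed every 30 days (or more often) counts as a failure.
    if profile.recal_interval_days <= min_recal_interval_days:
        findings.append("recalibration required too often")
    if profile.uptime_pct < min_uptime_pct:
        findings.append("field uptime below limit")
    return findings


candidate = AccuracyProfile(max_error_pct=1.5, repeatability_pct=2.0,
                            drift_pct_per_month=0.5, recal_interval_days=30,
                            uptime_pct=97.0)
print(review(candidate))  # ['recalibration required too often']
```

Here a monitor with good initial error figures still fails review on maintenance burden, which is the point the paragraph makes.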

Core accuracy metrics technical evaluators should review

For air emissions and ambient monitoring, common accuracy expressions include ±0.1 mg/m³, ±2% of reading, or ±1 ppb depending on the analyte and method. For water quality systems, the evaluation may focus on pH accuracy of ±0.1, dissolved oxygen within ±0.2 mg/L, conductivity within ±1%, or turbidity within ±2% of reading. The right benchmark depends on the compliance threshold and process risk, not just the instrument brochure.
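Specifications come in two forms: an absolute band (pH ±0.1, dissolved oxygen ±0.2 mg/L) or a percentage of reading (turbidity ±2%, conductivity ±1%). A minimal sketch for checking a field reading against a reference value follows. Two assumptions are built in: the percentage band is computed on the reference value, and when a datasheet states both forms, the larger band applies ("whichever is greater"), a convention some datasheets use. Confirm the vendor's actual wording before relying on either.

```python
from typing import Optional


def allowed_error(reference: float,
                  abs_tol: Optional[float] = None,
                  pct_of_reading: Optional[float] = None) -> float:
    """Allowed deviation for a spec given as an absolute band, a % of reading, or both.

    Assumptions: the % band is taken on the reference value, and when both forms
    are given the larger band applies.
    """
    if abs_tol is None and pct_of_reading is None:
        raise ValueError("give abs_tol, pct_of_reading, or both")
    bands = []
    if abs_tol is not None:
        bands.append(abs_tol)
    if pct_of_reading is not None:
        bands.append(abs(reference) * pct_of_reading / 100.0)
    return max(bands)


def within_spec(measured: float, reference: float, **spec: float) -> bool:
    """True if the instrument reading lies inside the allowed band around the reference."""
    return abs(measured - reference) <= allowed_error(reference, **spec)


print(within_spec(7.08, 7.00, abs_tol=0.1))         # True  (pH, ±0.1)
print(within_spec(10.3, 10.0, pct_of_reading=2.0))  # False (turbidity, ±2% of reading)
```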

Repeatability matters because industrial decisions are often triggered by trend changes rather than one-time values. If a PM2.5 monitor reports 35, 39, and 31 µg/m³ over 10 minutes in a stable location, the issue may not be environmental fluctuation but weak signal stability. For many industrial buyers, a repeatability target within 1%–3% under stable conditions is more useful than broad headline claims.
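One common way to quantify repeatability is the relative standard deviation (RSD): the sample standard deviation divided by the mean. Treating RSD as "the" repeatability metric is an assumption here, since vendors may define repeatability differently. Applied to the three PM2.5 readings above, it shows how far they sit from a 1%–3% target.

```python
import statistics


def relative_std_dev(readings: list[float]) -> float:
    """Sample standard deviation as a percentage of the mean (RSD, %)."""
    if len(readings) < 2:
        raise ValueError("need at least two readings")
    mean = statistics.mean(readings)
    return statistics.stdev(readings) / mean * 100.0


pm25 = [35.0, 39.0, 31.0]  # µg/m³, stable location, 10-minute window (values from the text)
rsd = relative_std_dev(pm25)
print(f"RSD = {rsd:.1f}%")              # RSD = 11.4%
print("meets 3% target:", rsd <= 3.0)  # meets 3% target: False
```

With a mean of 35 µg/m³ and a standard deviation of 4 µg/m³, the RSD is roughly four times the upper end of the target range.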

Why stated accuracy and field accuracy differ

Laboratory verification is usually performed at controlled temperatures such as 20°C–25°C, stable humidity, and clean power supply. Industrial sites rarely offer those conditions. Near furnaces, substations, chemical storage, wastewater pits, or marine terminals, systems may face 10°C to 45°C temperature swings, 80%–95% relative humidity, corrosive gases, electromagnetic interference, and intermittent vibration. These conditions can shift zero points, delay response time, and accelerate sensor aging.
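A practical consequence is that a vendor's accuracy figure applies only to readings taken inside the conditions under which it was verified. The sketch below, a hypothetical helper rather than any vendor feature, estimates what share of a site log falls outside that envelope. The 20°C–25°C band comes from the text; the 30%–70% RH band and the site log values are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Envelope:
    """Conditions under which an accuracy figure was verified (values are hypothetical)."""
    t_min_c: float
    t_max_c: float
    rh_min_pct: float
    rh_max_pct: float

    def contains(self, temp_c: float, rh_pct: float) -> bool:
        return (self.t_min_c <= temp_c <= self.t_max_c
                and self.rh_min_pct <= rh_pct <= self.rh_max_pct)


def share_outside(envelope: Envelope, records: list[tuple[float, float]]) -> float:
    """Fraction of (temperature °C, RH %) records taken outside the verified envelope."""
    if not records:
        return 0.0
    outside = sum(1 for t, rh in records if not envelope.contains(t, rh))
    return outside / len(records)


lab = Envelope(t_min_c=20.0, t_max_c=25.0, rh_min_pct=30.0, rh_max_pct=70.0)  # RH band assumed
site = [(12.0, 88.0), (22.0, 60.0), (41.0, 82.0), (24.0, 93.0)]  # illustrative site log
print(f"{share_outside(lab, site):.0%} of readings fall outside the verified envelope")
```

A high share does not mean the data are wrong, only that the brochure figure no longer describes them and field verification has to fill the gap.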

The table below gives a practical framework for evaluating how accuracy claims should be interpreted during technical review and vendor comparison.

The key conclusion is that modern Environment & Ecology monitoring systems can be highly accurate, but only when the claimed figures are tied to operating range, maintenance burden, and environmental tolerance. A narrow brochure value is less useful than a clear accuracy profile across the full lifecycle.

Which technologies influence measurement reliability the most

The performance of Environment & Ecology monitoring systems depends heavily on sensor type and signal processing architecture. Two systems may monitor the same pollutant but produce different results because they rely on different detection principles, compensation models, or sampling methods. For technical evaluators, understanding the underlying technology reduces the risk of comparing unlike systems as if they were equivalent.

Common sensor technologies and their field behavior

Electrochemical sensors are widely used for gases such as CO, H2S, SO2, and NO2 because they offer compact form factors and fast response, often within 20–60 seconds. However, they can be sensitive to cross-interference and may require more frequent replacement in harsh industrial settings. Optical methods, including non-dispersive infrared and UV absorption, often deliver stronger stability for certain gases but may involve higher capital cost and more demanding optical path maintenance.

For particulate matter, light-scattering sensors are efficient for trend monitoring and distributed networks, yet they can overread or underread when aerosol composition changes. Beta attenuation or gravimetric reference methods are generally more robust for compliance-grade use, though they are larger, slower, and costlier to deploy. In water systems, digital smart probes improve traceability, but membrane fouling, scaling, and biofilm formation still affect long-term accuracy.

Signal conditioning and compensation logic

Modern systems increasingly rely on temperature compensation, humidity correction, pressure normalization, and algorithmic filtering. These features can improve field accuracy by 5%–20% depending on application, but they also create a new procurement question: are the corrections transparent and documented? If a vendor cannot explain how compensation is applied, technical teams may struggle to validate alarm thresholds or compare results against laboratory references.
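Pressure and temperature normalization is one correction that can be fully documented. For a mass concentration measured at actual conditions, the ideal gas law gives C_ref = C_actual × (P_ref / P_actual) × (T_actual / T_ref), with temperatures in kelvin. The sketch below returns the applied factor alongside the value so the correction stays auditable. The default reference state (0°C, 101.325 kPa) is an assumption, because jurisdictions use different reference states; stack reporting often also requires moisture and oxygen corrections, which are not covered here.

```python
from dataclasses import dataclass

KELVIN_OFFSET = 273.15


@dataclass(frozen=True)
class NormalizedReading:
    raw_mg_m3: float         # concentration at actual (measured) conditions
    factor: float            # combined pressure/temperature factor that was applied
    normalized_mg_m3: float  # concentration at reference conditions


def normalize_to_reference(raw_mg_m3: float, temp_c: float, pressure_kpa: float,
                           ref_temp_c: float = 0.0,
                           ref_pressure_kpa: float = 101.325) -> NormalizedReading:
    """Convert a mass concentration to reference conditions using the ideal gas law.

    C_ref = C_actual * (P_ref / P_actual) * (T_actual / T_ref), temperatures in kelvin.
    The default reference state is an assumption; use the one your permit specifies.
    """
    t_act = temp_c + KELVIN_OFFSET
    t_ref = ref_temp_c + KELVIN_OFFSET
    factor = (ref_pressure_kpa / pressure_kpa) * (t_act / t_ref)
    return NormalizedReading(raw_mg_m3, factor, raw_mg_m3 * factor)


r = normalize_to_reference(raw_mg_m3=10.0, temp_c=35.0, pressure_kpa=98.0)
print(f"factor={r.factor:.3f}, normalized={r.normalized_mg_m3:.2f} mg/m3")
```

Storing the raw value and the factor together lets a reviewer reproduce the corrected figure from the laboratory reference, which is exactly the transparency question raised above.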

- Check whether sampling is direct, extractive, or diffusive.

- Confirm response time at both low and high concentrations.

- Review cross-sensitivity lists for chemically similar compounds.

- Verify sensor replacement cycles, often 6–24 months depending on technology.
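Cross-sensitivity lists are most useful when they can be applied. A minimal sketch, assuming a simple linear interference model and independently measured interferent concentrations, subtracts the estimated interferent contribution from a raw electrochemical reading. The function name and the hydrogen coefficient in the example are hypothetical placeholders; real coefficients come from the vendor's cross-sensitivity table and may not be linear over the full range.

```python
def correct_cross_sensitivity(raw_ppm: float,
                              interferents_ppm: dict[str, float],
                              coefficients: dict[str, float]) -> float:
    """Subtract the estimated contribution of interfering gases from a raw reading.

    Linear model (an assumption): raw = target + sum(k[gas] * c[gas]), where k[gas]
    is the sensor's response to 1 ppm of the interferent, in ppm of target gas.
    """
    unknown = set(interferents_ppm) - set(coefficients)
    if unknown:
        # No cross-sensitivity data for this gas, so refuse to guess a correction.
        raise ValueError(f"no cross-sensitivity coefficient for: {sorted(unknown)}")
    contribution = sum(coefficients[gas] * conc for gas, conc in interferents_ppm.items())
    # Clamp at zero because a negative concentration is not physical; a large
    # negative remainder suggests the coefficients need review.
    return max(raw_ppm - contribution, 0.0)


# Hypothetical CO sensor with a hydrogen cross-sensitivity of 0.2 ppm CO per ppm H2.
co_ppm = correct_cross_sensitivity(12.0, {"H2": 20.0}, {"H2": 0.2})
print(co_ppm)  # 8.0
```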

In technical evaluations, a lower-cost sensor with quarterly recalibration and annual replacement may be less economical than a premium platform with 12-month stability. This is especially true for large industrial estates where 20, 50, or 100 distributed monitoring points can multiply maintenance labor.
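The trade-off can be checked with simple arithmetic. The sketch below compares capital cost plus recurring recalibration and replacement cost per monitoring point. Every price and frequency in it is a hypothetical placeholder, not market data; the structure, not the numbers, is the point.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    name: str
    capex_per_point: float        # purchase + installation, per monitoring point
    recals_per_year: float        # field recalibration visits per point per year
    cost_per_recal: float         # labor + calibration standards per visit
    replacements_per_year: float  # sensor element replacements per point per year
    cost_per_replacement: float


def lifecycle_cost(opt: Option, points: int, years: int) -> float:
    """Capex plus recurring recalibration and replacement cost over the evaluation period."""
    yearly = (opt.recals_per_year * opt.cost_per_recal
              + opt.replacements_per_year * opt.cost_per_replacement)
    return points * (opt.capex_per_point + years * yearly)


# All prices are hypothetical placeholders.
budget = Option("low-cost", 2_000, recals_per_year=4, cost_per_recal=300,
                replacements_per_year=1, cost_per_replacement=800)
premium = Option("premium", 4_000, recals_per_year=1, cost_per_recal=300,
                 replacements_per_year=0.5, cost_per_replacement=1_500)

for n in (20, 50, 100):
    b, p = lifecycle_cost(budget, n, 3), lifecycle_cost(premium, n, 3)
    print(f"{n:>3} points over 3 years: low-cost {b:,.0f} vs premium {p:,.0f}")
```

With these inputs the low-cost option is cheaper over one year but more expensive over three, and the gap grows in proportion to the number of points, which is why the evaluation horizon should be fixed before vendors are compared.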

Calibration, verification, and data integrity determine whether accuracy is sustainable

Even the best-designed Environment & Ecology monitoring systems lose value if calibration is inconsistent or data records cannot withstand audit review. In most industrial settings, long-term reliability depends on a disciplined combination of commissioning checks, scheduled calibration, reference comparison, and tamper-resistant data handling.

A practical calibration framework for industrial buyers

A common implementation model includes 3 stages: factory calibration, site acceptance verification, and periodic field recalibration. Factory calibration confirms baseline performance, but site acceptance is where installation errors often appear. Problems such as sample line leakage, wrong sensor height, poor shielding, or power noise can produce deviations before operations even begin.

For many air and water monitoring applications, technical teams should expect a calibration or verification cycle every 1 to 3 months for critical points, and every 6 to 12 months for more stable secondary parameters. The correct interval depends on contamination load, sensor chemistry, and compliance exposure. Shorter intervals may be justified where exceedance penalties or shutdown risk are high.
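One way to turn this guidance into a schedule is to pick an interval inside the band for each point according to site severity. In the sketch below, the 1–3 and 6–12 month bands come from the text; the linear 0-to-1 severity scale and the approximation of one month as 30 days are hypothetical simplifications.

```python
from datetime import date, timedelta

# Interval bands (months) from the guidance above; 1 month ≈ 30 days is a simplification.
BANDS_MONTHS = {"critical": (1, 3), "secondary": (6, 12)}


def calibration_interval_days(criticality: str, severity: float) -> int:
    """Pick an interval in the band: severity 0.0 (benign) -> longest, 1.0 (harsh) -> shortest.

    The linear severity scale is a hypothetical scoring choice, not a standard.
    """
    if not 0.0 <= severity <= 1.0:
        raise ValueError("severity must be between 0 and 1")
    shortest, longest = BANDS_MONTHS[criticality]
    months = longest - severity * (longest - shortest)
    return round(months * 30)


def next_due(last_calibrated: date, criticality: str, severity: float) -> date:
    return last_calibrated + timedelta(days=calibration_interval_days(criticality, severity))


print(next_due(date(2025, 1, 15), "critical", severity=1.0))   # 2025-02-14
print(next_due(date(2025, 1, 15), "secondary", severity=0.0))  # 2026-01-10
```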

The table below outlines a realistic review checklist that procurement and engineering teams can use when qualifying systems for industrial deployment.

Technical evaluators should note that calibration alone does not guarantee reliable reporting. Data integrity features such as checksum validation, encrypted transmission, local buffering, alarm logging, and user permission control are increasingly important. If a network outage causes 8 hours of missing data and no recovery buffer exists, the system may still be technically accurate but operationally unreliable.
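Local buffering and checksum validation can be sketched together: each record is sealed with a CRC32 of its canonical JSON payload and held in a bounded queue until the uplink returns. This is a hypothetical design, not a specific vendor's firmware. Note that CRC32 detects accidental corruption only; protection against deliberate tampering needs a cryptographic mechanism such as an HMAC or digital signature.

```python
import json
import math
import zlib
from collections import deque
from typing import Callable


def buffer_capacity(outage_hours: float, interval_s: int) -> int:
    """Records needed to ride through an outage of the given length at a fixed logging interval."""
    return math.ceil(outage_hours * 3600 / interval_s)


def seal(record: dict) -> dict:
    """Attach a CRC32 of the canonical JSON payload so accidental corruption can be detected."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return {"payload": payload, "crc32": zlib.crc32(payload.encode("utf-8"))}


def verify(sealed: dict) -> bool:
    return zlib.crc32(sealed["payload"].encode("utf-8")) == sealed["crc32"]


class StoreAndForward:
    """Local buffer that holds sealed records while the uplink is down (hypothetical design)."""

    def __init__(self, capacity: int):
        self.queue: deque = deque(maxlen=capacity)  # oldest records are dropped once full
        self.rejected: list[dict] = []              # corrupted records kept for audit

    def log(self, record: dict) -> None:
        self.queue.append(seal(record))

    def flush(self, send: Callable[[dict], bool]) -> int:
        """Transmit buffered records oldest-first; stop at the first failed send."""
        sent = 0
        while self.queue:
            item = self.queue[0]
            if not verify(item):
                self.rejected.append(self.queue.popleft())  # never transmit a bad record
                continue
            if not send(item):
                break  # uplink still down; keep the record and retry later
            self.queue.popleft()
            sent += 1
        return sent


buf = StoreAndForward(buffer_capacity(outage_hours=24, interval_s=60))  # 1440 records
for minute in range(8 * 60):  # an 8-hour outage at 1-minute logging
    buf.log({"point": "AQ-01", "minute": minute, "pm25_ug_m3": 18.0})
print(buf.flush(lambda item: True))  # 480 (uplink restored, backlog delivered)
```

Sizing the buffer from the longest outage the site must tolerate (2, 6, or 24 hours) turns the data-loss question into a concrete storage requirement.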

Questions to ask before procurement approval

- What is the recommended calibration interval under heavy dust, salt, or chemical vapor exposure?

- What happens to data during communication loss lasting 2, 6, or 24 hours?

- Which maintenance tasks require trained technicians rather than local operators?

- Can the system provide traceable logs for alarm edits, calibration events, and firmware changes?
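Traceable logs are easier to trust when later edits are detectable. A minimal sketch of a hash-chained audit log follows: each entry stores the SHA-256 hash of the previous entry, so changing or deleting an earlier record breaks verification. The class, event names, and user IDs are hypothetical. A chain alone does not stop someone with full write access from rebuilding it; in practice the latest hash would also be anchored externally or signed.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only, hash-chained log for alarm edits, calibration events, and firmware changes."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def append(self, event_type: str, user: str, details: dict) -> dict:
        """Add an entry linked to the previous one; details must be JSON-serializable."""
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {
            "time": datetime.now(timezone.utc).isoformat(),
            "event": event_type,
            "user": user,
            "details": dict(details),  # shallow copy so later caller changes do not leak in
            "prev": prev,
        }
        entry = dict(body, hash=self._digest(body))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and link; False if any entry was altered, removed, or reordered."""
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append("calibration", "tech.lee", {"point": "WQ-03", "param": "pH", "offset": 0.04})
log.append("alarm_edit", "ops.kim", {"point": "AQ-01", "threshold_old": 35, "threshold_new": 50})
print(log.verify())                         # True
log.entries[0]["details"]["offset"] = 0.00  # simulate a quiet after-the-fact edit
print(log.verify())                         # False
```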

Environmental variables that most often reduce field accuracy

In real industrial settings, the largest accuracy losses often come from environmental interference rather than sensor defects. Environment & Ecology monitoring systems installed near stacks, cooling towers, wastewater basins, loading bays, crushers, or substations must handle rapidly changing conditions that do not exist during controlled testing.

Temperature, humidity, dust, and vibration

Temperature swings of 15°C–25°C within a single shift can affect sensor baselines, especially in low-concentration gas monitoring. High humidity can create condensation, which is particularly problematic in optical chambers and sample tubing. Dust loading may block inlets, coat optics, or contaminate membranes. Persistent vibration from pumps, compressors, or rotating equipment can also weaken connectors and alter sampling stability over time.
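Condensation risk can be estimated before installation by comparing surface temperatures against the dew point. The sketch below uses the Magnus approximation with the commonly cited coefficients b = 17.62 and c = 243.12°C. The 2°C safety margin and the example temperatures are illustrative assumptions.

```python
import math

# Magnus-formula coefficients over water (b dimensionless, c in °C).
MAGNUS_B = 17.62
MAGNUS_C = 243.12


def dew_point_c(temp_c: float, rh_pct: float) -> float:
    """Approximate dew point (°C) from air temperature and relative humidity."""
    if not 0.0 < rh_pct <= 100.0:
        raise ValueError("relative humidity must be in (0, 100]")
    gamma = math.log(rh_pct / 100.0) + MAGNUS_B * temp_c / (MAGNUS_C + temp_c)
    return MAGNUS_C * gamma / (MAGNUS_B - gamma)


def condensation_risk(ambient_c: float, rh_pct: float, surface_c: float,
                      margin_c: float = 2.0) -> bool:
    """True if a surface (sample tube, optical window) is within margin_c of the dew point.

    The 2 °C default margin is an illustrative assumption.
    """
    return surface_c <= dew_point_c(ambient_c, rh_pct) + margin_c


# Humid outdoor air drawn through tubing that passes a 22 °C air-conditioned shelter.
print(f"dew point: {dew_point_c(30.0, 90.0):.1f} °C")
print("risk at 22 °C surface:", condensation_risk(30.0, 90.0, surface_c=22.0))
print("risk with heated line at 35 °C:", condensation_risk(30.0, 90.0, surface_c=35.0))
```

At 30°C and 90% RH the dew point is about 28°C, so any cooler section of the sample path will condense unless it is heated or the gas is dried first.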

Installation decisions that improve reliability

- Use weather shielding and thermal management where solar loading is high.

- Keep sample lines as short as practical to reduce lag and adsorption effects.

- Separate signal wiring from high-voltage lines to limit electrical noise.

- Define inspection points every 30–90 days based on site severity.
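The benefit of short sample lines can be quantified as a transport delay: the internal volume of the line divided by the sample flow. The sketch below assumes plug flow and ignores dispersion, wall adsorption, and analyzer cell volume, so it gives a lower bound on real response time. The 4 mm inner diameter and 2 L/min flow are hypothetical example values.

```python
import math


def transport_lag_s(inner_diameter_mm: float, length_m: float, flow_l_per_min: float) -> float:
    """Plug-flow transport delay through a sample line: internal volume / volumetric flow.

    Ignores dispersion, wall adsorption, and analyzer cell volume (a lower bound).
    """
    radius_m = inner_diameter_mm / 2000.0                   # mm diameter -> m radius
    volume_l = math.pi * radius_m ** 2 * length_m * 1000.0  # m³ -> L
    return volume_l / flow_l_per_min * 60.0                 # min -> s


for length in (5, 30):
    print(f"{length:>2} m line: {transport_lag_s(4.0, length, 2.0):.1f} s")
```

Delay scales linearly with length at a given diameter and flow, so a 30 m run adds six times the lag of a 5 m run before adsorption effects are even considered.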

For technical evaluators, this means system accuracy should be validated as an installed solution, not just as a sensor package. A well-specified monitor can still underperform if enclosure ventilation, grounding, mounting height, or sampling geometry are wrong. In many projects, installation quality accounts for a meaningful share of performance variation during the first 6 months.

How to choose the right system for compliance, risk control, and procurement value

When comparing Environment & Ecology monitoring systems, the best choice is rarely the one with the lowest headline error or the lowest upfront price. A stronger procurement decision balances at least 5 factors: measurement fit, regulatory relevance, lifecycle maintenance, data transparency, and service responsiveness. This is especially important for multinational facilities operating under CE, UL, ISO-aligned internal controls, or regional emissions and discharge requirements.

A decision model for technical evaluators

First, map each monitoring point to its function: compliance reporting, process optimization, early warning, boundary surveillance, or worker safety support. A compliance-critical point may justify a reference-grade system with tighter drift control and quarterly validation. A secondary trend point may accept broader tolerance if it reduces deployment cost across 40 or more locations.
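The mapping step can be written as a small policy table. In the sketch below, the five functions come from the text, but the grade and validation interval assigned to each are hypothetical policy choices; only the quarterly validation for compliance-critical points follows the paragraph above. When a point serves several functions, the strictest requirement wins.

```python
from enum import Enum


class Function(Enum):
    COMPLIANCE = "compliance reporting"
    PROCESS = "process optimization"
    EARLY_WARNING = "early warning"
    BOUNDARY = "boundary surveillance"
    SAFETY = "worker safety support"


# Hypothetical policy: (equipment grade, validation interval in months) per function.
POLICY = {
    Function.COMPLIANCE: ("reference-grade", 3),
    Function.SAFETY: ("reference-grade", 3),
    Function.EARLY_WARNING: ("indicative", 6),
    Function.BOUNDARY: ("indicative", 6),
    Function.PROCESS: ("indicative", 12),
}


def requirement(functions: set[Function]) -> tuple[str, int]:
    """Strictest grade and shortest validation interval across every function a point serves."""
    if not functions:
        raise ValueError("each monitoring point needs at least one function")
    grades = {POLICY[f][0] for f in functions}
    grade = "reference-grade" if "reference-grade" in grades else "indicative"
    interval = min(POLICY[f][1] for f in functions)
    return grade, interval


points = {  # hypothetical monitoring points
    "STACK-01": {Function.COMPLIANCE},
    "FENCE-07": {Function.BOUNDARY, Function.EARLY_WARNING},
    "PROC-12": {Function.PROCESS},
}
for name, funcs in points.items():
    print(name, requirement(funcs))
```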

Second, compare total operating burden over 12 to 24 months. Systems with lower consumable usage, modular replacement design, and remote diagnostics can reduce field visits significantly. Third, review vendor support capability, including commissioning assistance, spare parts lead time, firmware update management, and escalation response within 24–72 hours for critical alarms.
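The five factors named earlier (measurement fit, regulatory relevance, lifecycle maintenance, data transparency, and service responsiveness) can be combined in a weighted scoring matrix. The weights, the 1–5 scale, and the vendor scores below are hypothetical; the useful discipline is agreeing on weights before any vendor is scored.

```python
# Hypothetical weights for the five factors; agree on them before scoring vendors.
WEIGHTS = {
    "measurement_fit": 0.30,
    "regulatory_relevance": 0.25,
    "lifecycle_maintenance": 0.20,
    "data_transparency": 0.15,
    "service_responsiveness": 0.10,
}


def weighted_score(scores: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Combine 1-5 factor scores into a single weighted score; every factor must be scored."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"unscored factors: {sorted(missing)}")
    return sum(weights[f] * scores[f] for f in weights)


vendor_a = {"measurement_fit": 4, "regulatory_relevance": 5, "lifecycle_maintenance": 3,
            "data_transparency": 4, "service_responsiveness": 3}
vendor_b = {"measurement_fit": 5, "regulatory_relevance": 4, "lifecycle_maintenance": 4,
            "data_transparency": 2, "service_responsiveness": 4}
for name, s in (("Vendor A", vendor_a), ("Vendor B", vendor_b)):
    print(f"{name}: {weighted_score(s):.2f}")
```

A pure weighted sum lets a strong factor mask a weak one: Vendor B edges ahead despite a data transparency score of 2. Setting a minimum acceptable score per factor is one way to keep audit and buffering gaps from being averaged away.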

Common evaluation mistakes to avoid

- Approving systems based only on nominal accuracy without drift data.

- Ignoring maintenance hours per station per quarter.

- Overlooking data retention, buffering, and audit trail requirements.

- Using one technology standard for all environments without site-specific review.
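Drift data does not need to come from the vendor alone; it can be estimated from the site's own periodic reference checks. The sketch below fits an ordinary least-squares slope of instrument error against time and scales it to a 30-day rate. The check schedule and error values are hypothetical.

```python
def drift_per_30_days(days: list[float], errors: list[float]) -> float:
    """Least-squares slope of (instrument - reference) error vs. time, scaled to 30 days."""
    n = len(days)
    if n < 2 or n != len(errors):
        raise ValueError("need at least two paired points")
    mean_d = sum(days) / n
    mean_e = sum(errors) / n
    sxx = sum((d - mean_d) ** 2 for d in days)
    if sxx == 0:
        raise ValueError("checks must be on different days")
    sxy = sum((d - mean_d) * (e - mean_e) for d, e in zip(days, errors))
    return sxy / sxx * 30.0


# Hypothetical monthly reference-gas checks: instrument error vs. reference, in ppm.
check_days = [0, 30, 60, 90]
errors_ppm = [0.0, 0.4, 0.9, 1.3]
print(f"drift = {drift_per_30_days(check_days, errors_ppm):.2f} ppm per 30 days")
```

Comparing this measured rate against the recalibration interval shows whether a system will stay within tolerance between visits, something a nominal accuracy figure cannot reveal.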

Modern Environment & Ecology monitoring systems can achieve high accuracy, often sufficient for industrial compliance and operational decision-making. But dependable performance is not created by sensors alone. It comes from matching the right detection method to the application, validating installation conditions, enforcing calibration discipline, and protecting data integrity from collection through reporting.

For technical evaluators, the most reliable systems are those that remain stable under real site stress, provide traceable maintenance logic, and support practical lifecycle control rather than one-time acceptance testing. If your team is assessing new monitoring deployments or upgrading legacy infrastructure, Global Industrial Core can help you compare options with a sourcing and technical lens aligned to industrial risk, compliance pressure, and long-term asset performance.

Contact us to discuss your operating conditions, request a tailored evaluation framework, or explore more Environment & Ecology monitoring solutions for high-stakes industrial environments.

